And then there were two…

Ultimately, based on the IP assigned, Omnia was a technology and VR product innovation company. Milk wanted to focus on VR content. The Omnia founders felt the best use of development funds (and investment) was neither making movies nor creating one-off “professional” VR cameras for a founder’s own use, given that the current video/film camera market, from a unit sales perspective, can be counted in the tens of thousands. Milk is doing great work at his company Here be Dragons. Omnia’s focus is on what it believes is the future of “mass VR content”: enabling the general consumer to use “point and click” 3D sphere-video cameras to produce and share millions of videos a year in “reality VR”, versus the handful of short 360 films and 3D video games released annually. That small output is the long-term capacity issue for those related industries, and thus the constraint on mass consumer adoption of VR headsets.

Client:

Omnicam

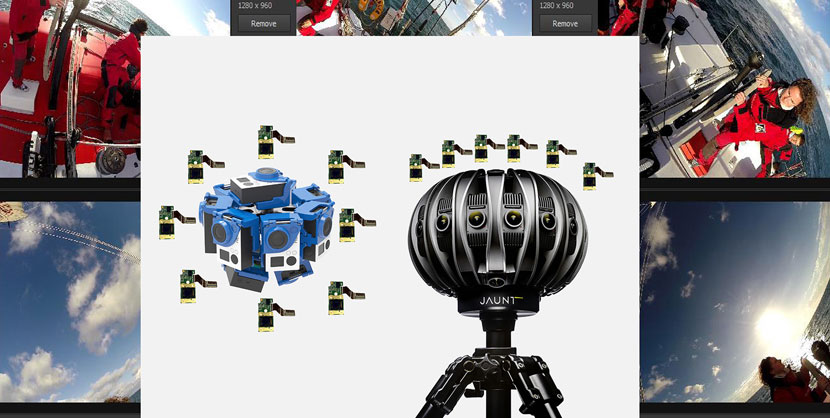

While working with Oculus Rift, Jaunt, and others through a Radical Media “VR project”, it was obvious the fledgling industry had become hyper-competitive and thus myopic, with a hyper-focus on owning augmented technology rather than rethinking its own technology. Having started out in VR 1.0 in the Bay Area in the ’90s, EGG MP Moulton understood the major limitations (cost/tech) of 1.0, and specifically which component cost reductions allowed “Frankensteined” bolt-on development by 2.0 companies such as Oculus Rift, which could provide pre-market display kits for only a few hundred dollars. Moulton had patented a separate soundwave recording device several years earlier after working on a sonogram “fix” development project, having noticed that much of the “noise” being picked up by users was not random but other types of waves (light waves). At the time, designing a single “macro” omniphone to detect and record both sound and image in one stream, a wave recorder, was feasible but far from financially viable. Moulton named that device the Omniaphone. However, looking at how companies like Jaunt were putting together multiple cameras, as GoPro had done earlier, and calling them “VR cameras”, it was obvious that the missing element for “reality” was both 3D and a single undistorted sphere, versus a 360 view made of multiple visual panels that had to be stitched together (creating nausea in viewers). Moulton realized that a single 3D sphere recording device would not only make professional (feature-length movie) production viable but also open the door to creating mass, high-volume content quickly enough to compel a mainstream buyer to purchase an HMD and truly mainstream the space.

The issue with head-mounted displays for VR was not price or cost for a mainstream user. It was simply that, outside of a handful of video games, there was no significant viewable content to consume; there are only so many times one can watch a 30-second 360 commercial or a 2-minute 360 movie. And, outside of video games, the dirty secret in film was that 360 video was not even VR. Replicating reality required recording not just a sphere of video and audio but a 3D sphere, and no 360 film had been produced either in 3D or as a full sphere. And, based on the amount of post-editing time required for a VR film, a feature film done in (360) VR would require approximately 10 years to complete.

So if there was a lack of mass content production in VR to provide the continually fresh supply that would compel mainstream users to purchase HMDs, and 3D video games were already being produced at capacity (8–10 new units in the best of years), and there were no real feature VR “film” prospects in the foreseeable future, what else could create demand? The only other type of media consumed in high volume was (personal) reality video. The best RealTV was exceptionally viral and consumable but, more importantly, it was a pre-existing “market” with hundreds of thousands (vs dozens) of content producers and millions of current weekly consumers.

One didn’t need training as a director to produce content, simply a camera and an interesting angle, or the bravery to get in the middle of conflict or to share embarrassing, funny, or exciting personal and social experiences. And looking at the market numbers…

We humans, looking at one field of view at a time but hearing sound in 3D, assume the horizon is flat and view stories on a stage against a wall. Reality, even when we don’t see and hear it all at once, surrounds us in a sphere, and activities are occurring all around us, whether or not we are paying attention…

* Dirty VR industry secret #21: even automated stitching leaves visual scars. We may not see them consciously when moving our head around in an HMD, but the seams carry so much more data than the normal visual field that our visual cortex is tasked with hyper-processing every time we cross over them. It’s like a speed bump for our visual cortex. The “minority” of people who are nauseated by VR media? They aren’t abnormal. They’ve just been exposed to this extraneous deep visual information for more than 30 seconds. Nausea is still a common reaction, though it is dealt with as an aberration, whereas it’s simply a major issue with…

Whether it’s a dozen GoPros in a plastic rig for $3K or a $45,000 HD Jaunt “proVR” camera, it’s the exact same thing: multiple cameras (sensors) recording multiple separate views that have to be stitched together into a 360 panorama. Viewer nausea with Oculus Rift did not come from high-res CGI games, which carried the same information as live recordings (or more when in 3D) but presented a continual seamless view. New auto-stitching programs are just a faster band-aid. We may not be conscious of the visual “scar lines” left by stitching, but our visual cortex processing these info “speed bumps” every time we move our head is what leads to user vomiting. These users aren’t defective outliers; they are simply active.

So this is why we focused on a consumer VR camera, but one very different from anything offered before:

- 8K high-resolution 3D

- Seamless (stitchless)

- Sphere video and audio

The goal was footage that could also be transmitted live and re-packed quickly so it could be distributed globally on the fly. But no one had done anything like this before. We knew this from seeing what was being done from the inside, and from watching the land grab convince people there was one “bolt on” solution that was just a matter of evolution and adaptation (versus a completely different medium requiring a completely different way to record).

As usual, we looked to biology to find complex (visual) systems for design engineering inspiration.

Nature is neither an efficient nor often a skillful engineer, but over millions of years it can satisfice for successful adaptation. While we’d studied visual systems from over a dozen different orders, in addition to computer vision systems, we’d narrowed the field to two genera and 14 species for the system that most closely fit our needs.

Chief among them was the insect group commonly known as the dragonflies (order Odonata).

Dragonflies have the largest compound eyes of any insect, each containing thousands of facets. Each facet points in a slightly different direction and has its own image path, and these are reassembled into one picture; a dragonfly can see in all directions at the same time.

A human cannot, but we knew that, in general, we’d have to mimic a visual capture system that could, so that a viewer could turn to look and listen in (almost) every direction just as they can in the real world: capturing reality to play it back as virtual (real) reality.

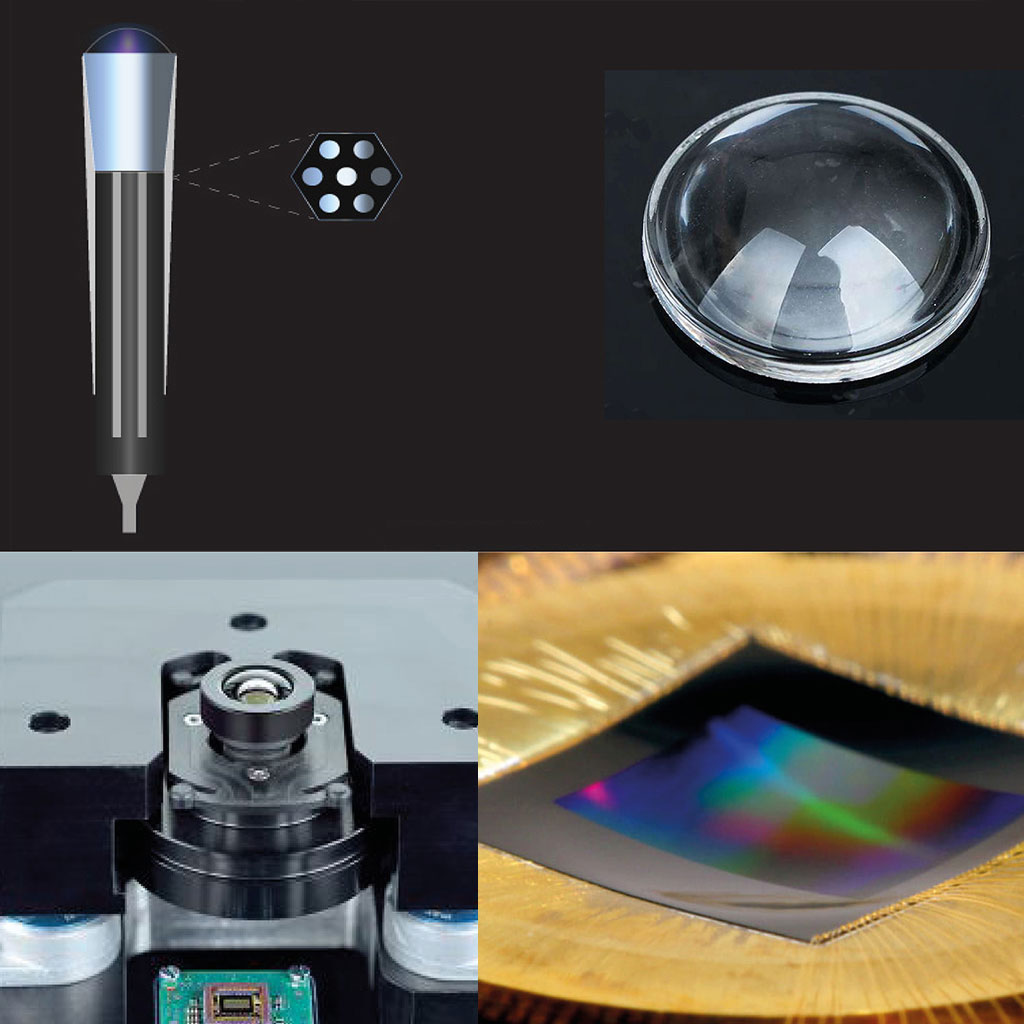

Unfortunately, it wasn’t as easy as creating a compound lens. We found we didn’t even need more than 6 actual lenses if we used asymmetrical lenses, which, even when covering over 120° (X/Y axes), do not distort images the way a conventional wide-angle lens does.
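As a rough sanity check on the six-lens figure, the sketch below compares the combined solid angle of six lenses with the full sphere (4π steradians). It assumes each lens sees an idealized rectangular field of view of 120° by 120°, which is only one reading of “120° (X/Y axes)”; the function names are illustrative and not part of any Omnia design. It uses the standard formula for the solid angle of a rectangular pyramid, Ω = 4·arcsin(sin(a/2)·sin(b/2)).

```python
import math

FULL_SPHERE_SR = 4 * math.pi  # solid angle of a complete sphere, in steradians


def rect_fov_solid_angle(h_deg: float, v_deg: float) -> float:
    """Solid angle (sr) of an idealized rectangular field of view.

    h_deg and v_deg are the full horizontal and vertical apex angles.
    Assumption: a perfect rectangular pyramid, not a real lens footprint.
    """
    half_h = math.radians(h_deg) / 2
    half_v = math.radians(v_deg) / 2
    return 4 * math.asin(math.sin(half_h) * math.sin(half_v))


def coverage_ratio(n_lenses: int, h_deg: float, v_deg: float) -> float:
    """Combined lens solid angle divided by the full sphere.

    A ratio of 1.0 means the lenses could tile the sphere with no overlap;
    anything above 1.0 is overlap available between neighbouring views.
    """
    return n_lenses * rect_fov_solid_angle(h_deg, v_deg) / FULL_SPHERE_SR


if __name__ == "__main__":
    # Six 90-degree views are the faces of a cube: an exact tiling.
    print(f"6 x 90 deg:  {coverage_ratio(6, 90, 90):.2f}x sphere")
    # Six 120-degree views leave a margin of overlap.
    print(f"6 x 120 deg: {coverage_ratio(6, 120, 120):.2f}x sphere")
```

Under these assumptions, six 90° views exactly tile the sphere like the faces of a cube, so six lenses at more than 120° have overlap to spare. A ratio above 1.0 is necessary but not sufficient for gap-free coverage, which also depends on how the lenses are arranged.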

What was slightly more complex is that every facet in a biological eye may have only one path to an underlying sensor (like an optic nerve in biology), but it also has its own enclosed tube, like its own barrel from lens to sensor, called an ommatidium.

Most insect eyes fall into one or other of two basic types, defined by the optics of image formation:

- the apposition eye, where the photoreceptors reside within the facets, which are optically isolated from each other; and

- the superposition eye, where the optical apparatus of the facets is separated from the array of photoreceptors by a clear zone, with many facets acting together as a single optical device.

Omnia uses a superpositioned “eye” structure.

Early working manufacturing prototype of the spherical sensor.

Precise 3D is computed by a bridge processor using the inter-parallax available from the sphere sample, which cannot be done with stereoscopic/dual-channel rigs.